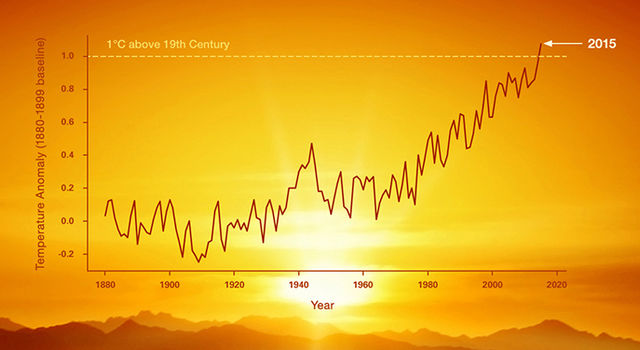

After publishing my last article on the usefulness of climate models, I received extensive criticism noting that several definitions and wordings were imprecise. That is reason enough to devote a little more attention to the topic of climate and complex systems. Complex systems appear in various forms. Be it within engineering sciences to model [...]